Toto

AI routing layer that optimizes LLM calls for cost and capability.

Product memo

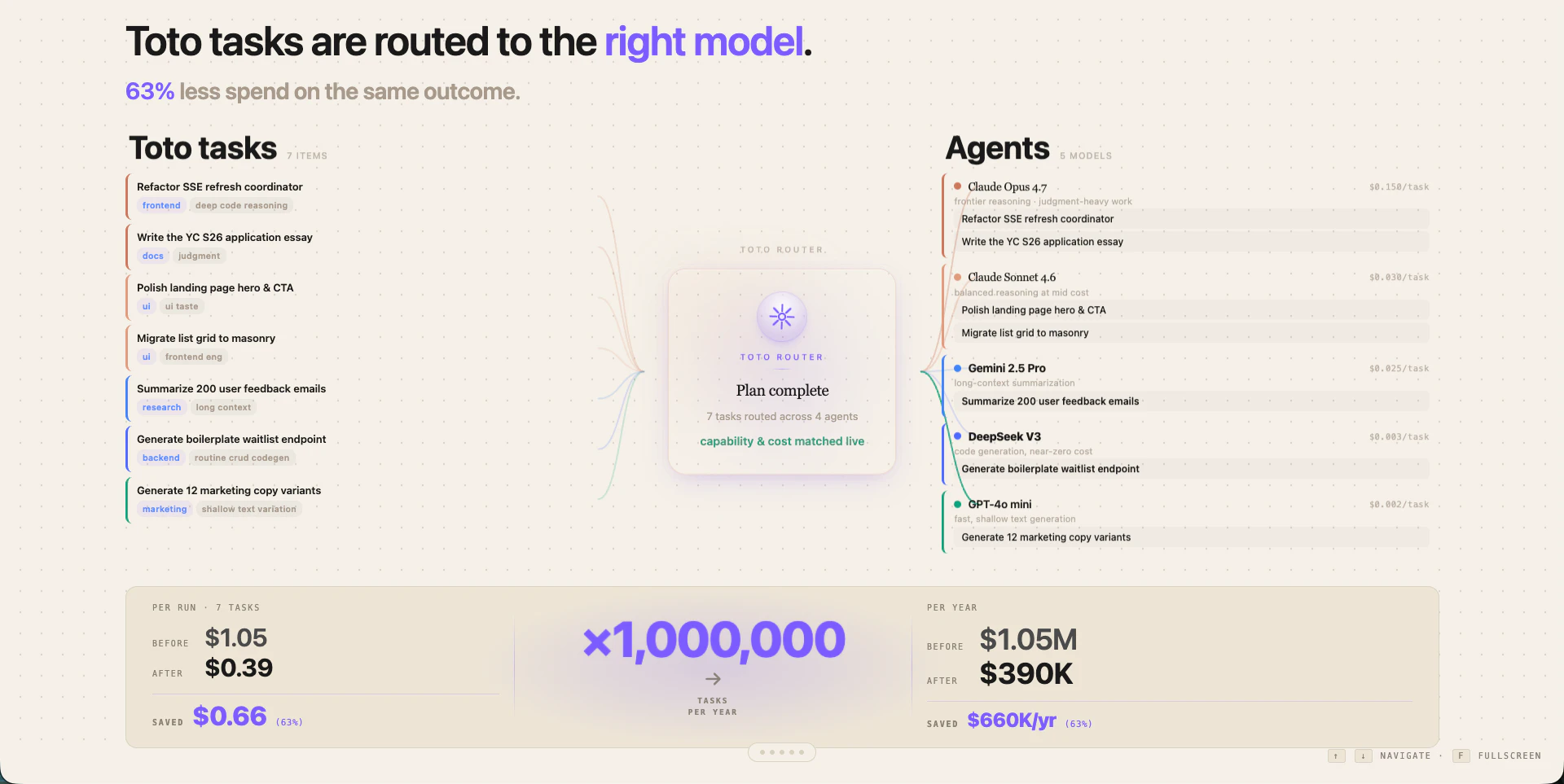

Toto targets teams overspending on large language model (LLM) calls and routes each task to the most cost-effective model. It positions itself as the interaction layer for human-agent collaboration, managing state bidirectionally. The wedge is a quantifiable cost reduction, up to 63% on LLM spend, achieved by matching tasks to the cheapest capable model. That pitch appeals directly to efficiency-minded developers and operations teams.

For who

Teams overspending on LLM calls

Solves what

Intelligently routes tasks to the cheapest capable LLM for cost savings

- LLM task routing

- Cost optimization

- Real-time state management

In their own words

You are spending too much on the wrong tokens.

Wrong model on the wrong task — every prompt, every day. Toto routes each task to the cheapest capable model.

Same outcome at a fraction of the spend.

Commercial cues

Model

Usage-based

Free tier

No

Trial

No

Toto router

Custom · Per-run pricing · Model routing

Pricing Strategy

Employs a usage-based pricing model, directly aligning its cost with the savings it delivers by routing LLM tasks to the most economical, yet capable, models.

- Charges per run, so the cost saving is visible on each LLM interaction.

- Offers annual billing, incentivizing committed usage with potential discounts.

- Provides custom pricing for enterprise clients, catering to high-volume, complex deployments.

Operator context

Founded

May 2026

HQ

United States

Builder Strategy

- Strategy Type: Niche Specialist

- Stage: Pre-Revenue

- Effort: Solo Buildable

Targets teams overspending on LLM calls with a clear cost-saving wedge and real-time state management for human-agent collaboration.

Unfair Advantages

- Unorthodox Pricing: Focus on per-run cost savings is a direct attack on incumbent LLM API pricing models.

- Proprietary Data: Proprietary routing logic to match tasks to the cheapest capable models.

Builder Lesson

Demonstrate clear, quantifiable cost savings (up to 63%) upfront to overcome initial LLM integration inertia.

Full Reasoning

Wins by directly attacking the most painful cost axis of LLM usage with a routing layer that promises significant savings. The real wedge isn't just cost, but also unified state management for complex human-agent workflows. For other builders in the AI infrastructure space, the lesson is clear: identify a single, painful incumbent cost and offer a credible, quantifiable reduction. Don't just build a feature; build a financial advantage.

About Toto

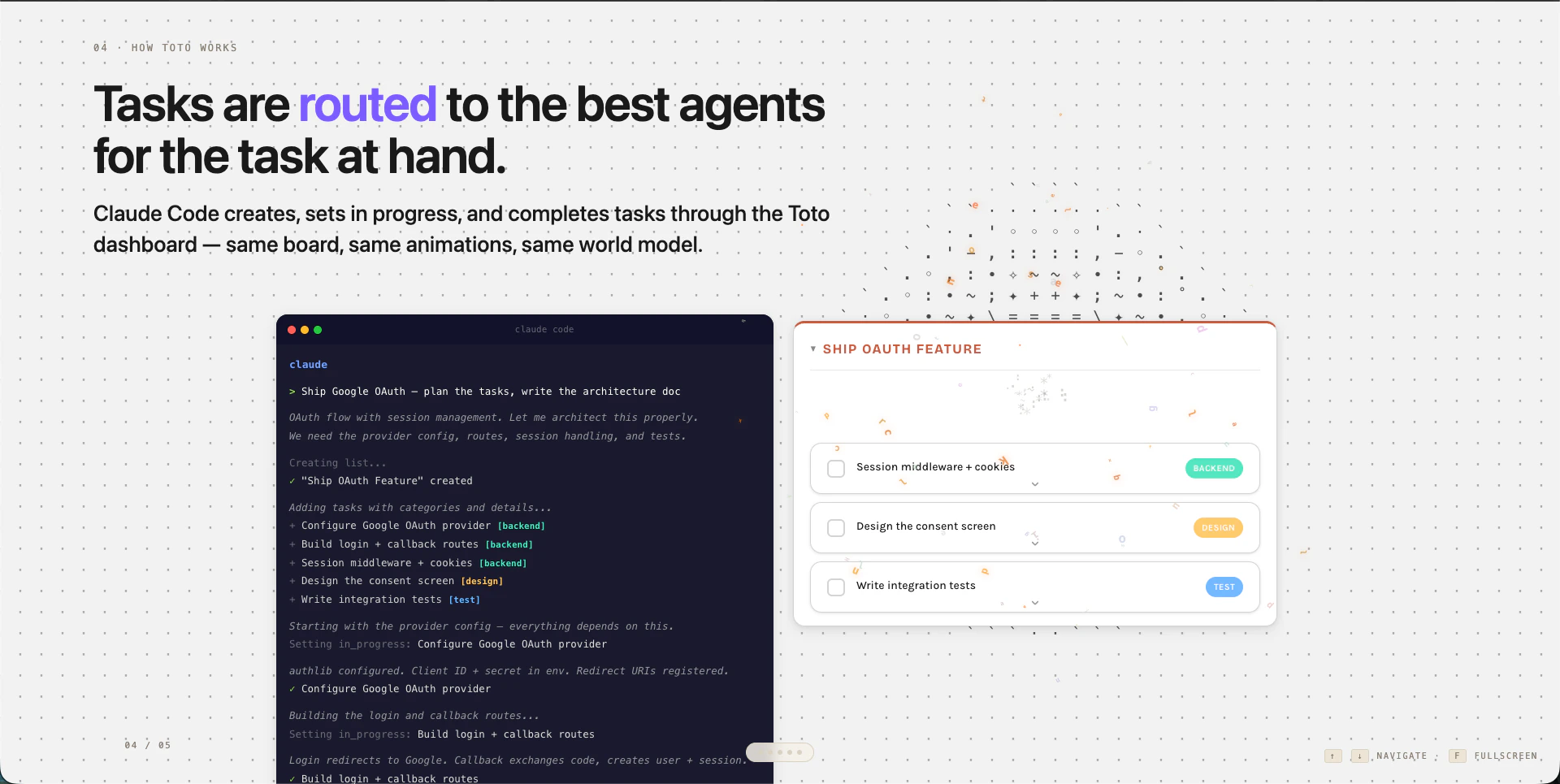

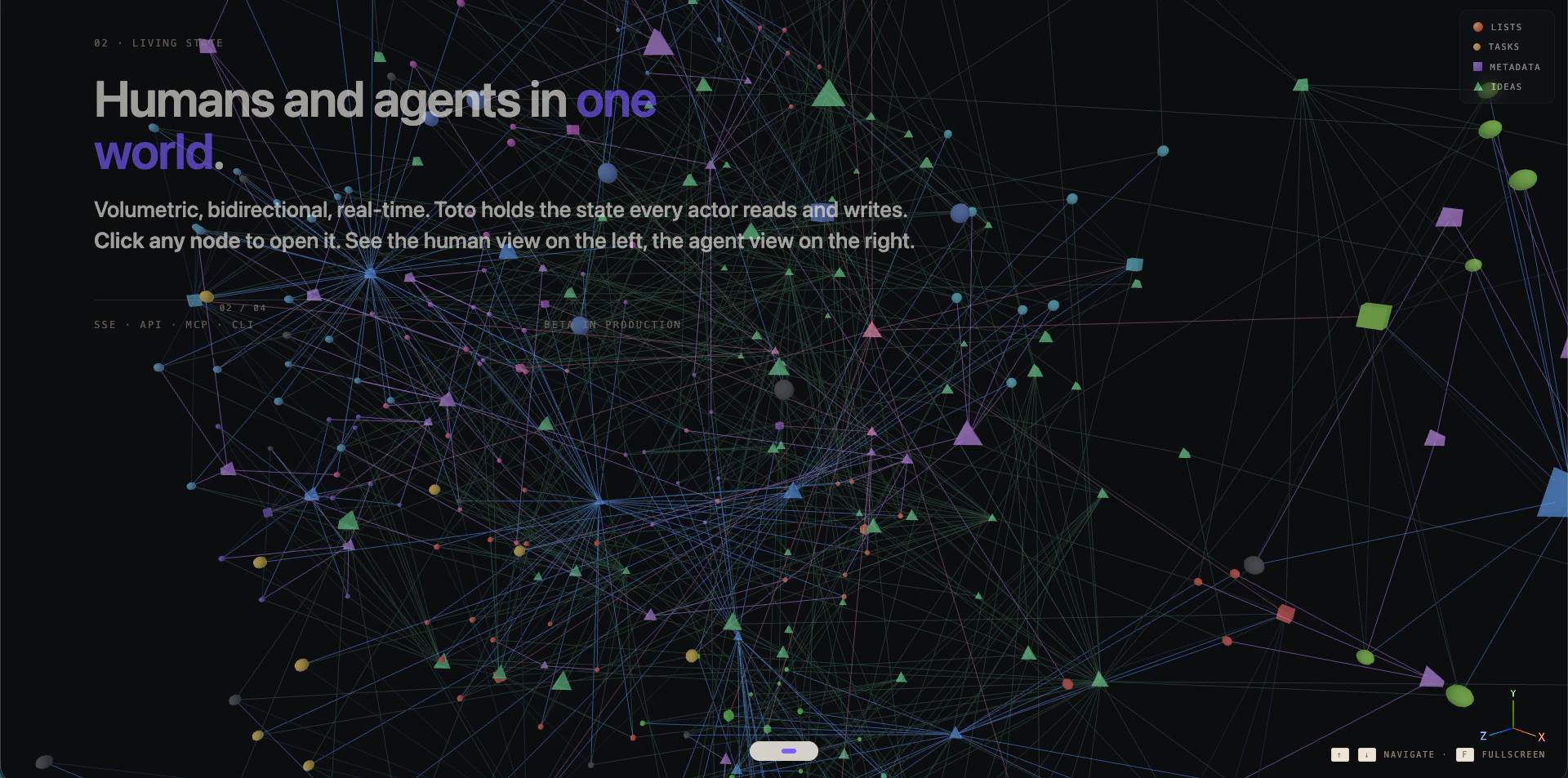

Toto is an AI routing layer designed for teams grappling with escalating large language model (LLM) costs. It acts as an intelligent intermediary, dynamically routing each LLM task to the most cost-effective model that can still meet the required capability. This approach allows organizations to achieve the same outcomes at a fraction of their current spend, effectively commoditizing LLM access by abstracting away the underlying provider.
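The "cheapest capable model" idea can be illustrated with a minimal sketch. This is not Toto's routing logic, which is proprietary and not described here. The model names, prices, and capability tiers below are hypothetical placeholders, and the single integer capability score is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # hypothetical price in USD
    capability: int            # hypothetical tier; higher means more capable


# Hypothetical catalog; real providers, prices, and tiers will differ.
CATALOG = [
    Model("small", 0.10, 1),
    Model("medium", 0.50, 2),
    Model("large", 3.00, 3),
]


def route(required_capability: int, catalog=CATALOG) -> Model:
    """Return the cheapest model whose capability tier meets the requirement."""
    capable = [m for m in catalog if m.capability >= required_capability]
    if not capable:
        raise ValueError(f"no model meets capability tier {required_capability}")
    return min(capable, key=lambda m: m.cost_per_1k_tokens)


if __name__ == "__main__":
    # A simple task (tier 1) goes to the cheapest model; a hard one (tier 3) does not.
    print(route(1).name)
    print(route(3).name)
```

In this sketch the hard part, deciding which capability tier a task actually needs, is left out entirely. That decision is presumably where a real router's value lies.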

Beyond mere cost savings, Toto also provides real-time state management and a human-agent collaboration interface, making it easier to build complex, multi-step AI workflows. For developers and operations teams, it offers both API and CLI access, integrating seamlessly into existing infrastructure. The core philosophy behind Toto is simple: why pay more when you can get the same quality for less? It's a strategic tool for any company looking to maximize its AI budget without compromising on performance.