Adaptive inference API that fixes LLM inaccuracies and continuously improves models.

Product memo

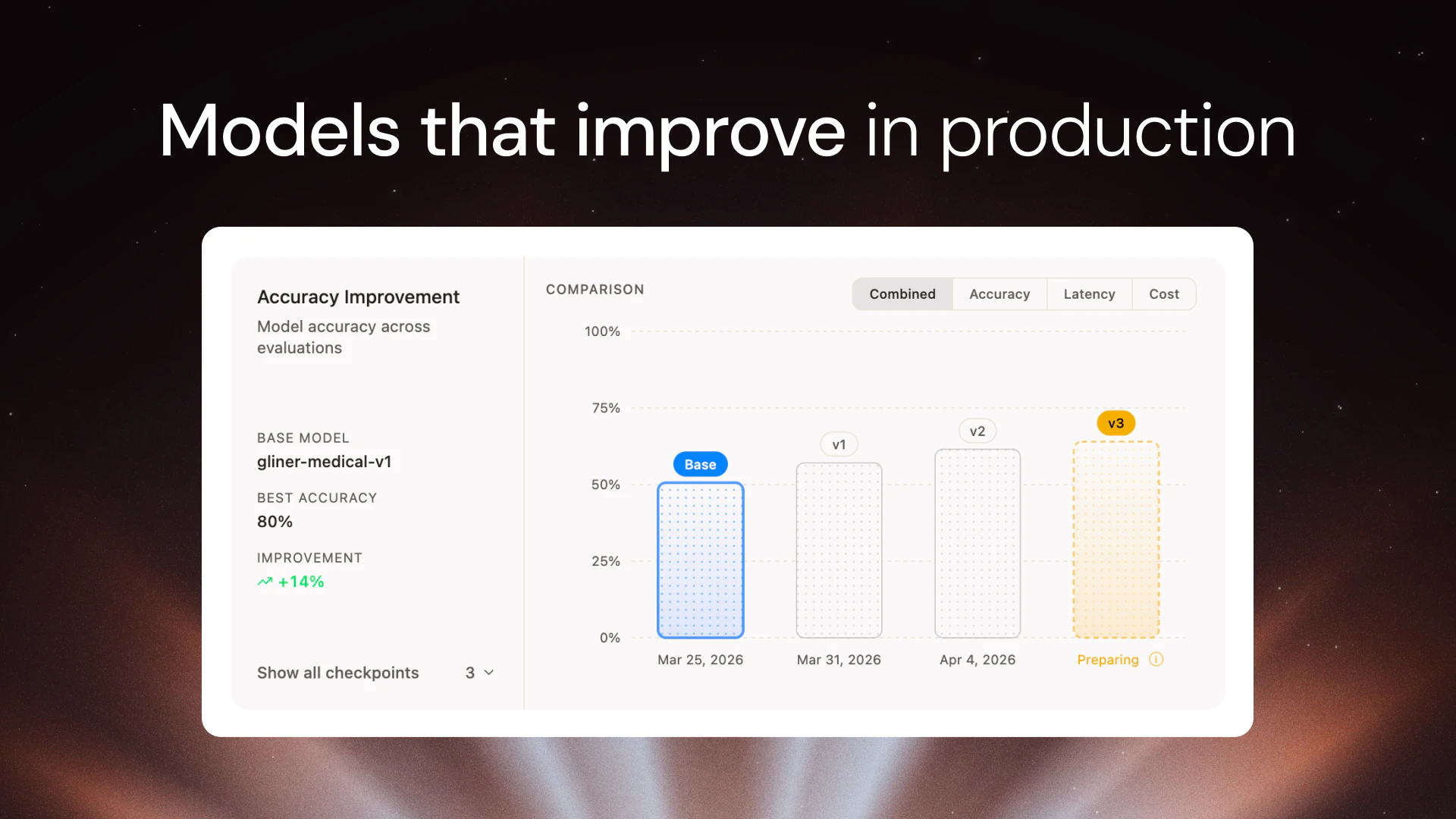

Targets developers and teams deploying large language models, offering an adaptive inference API that learns from production traffic. Its wedge is automated model retraining and deployment, significantly reducing the need for dedicated ML engineers. This positions it as a critical layer for improving existing LLM deployments, particularly open-source models, by making them "smarter" over time.

For who

Developers and teams deploying LLMs

Solves what

Fixing LLM inaccuracies and improving models with adaptive inference

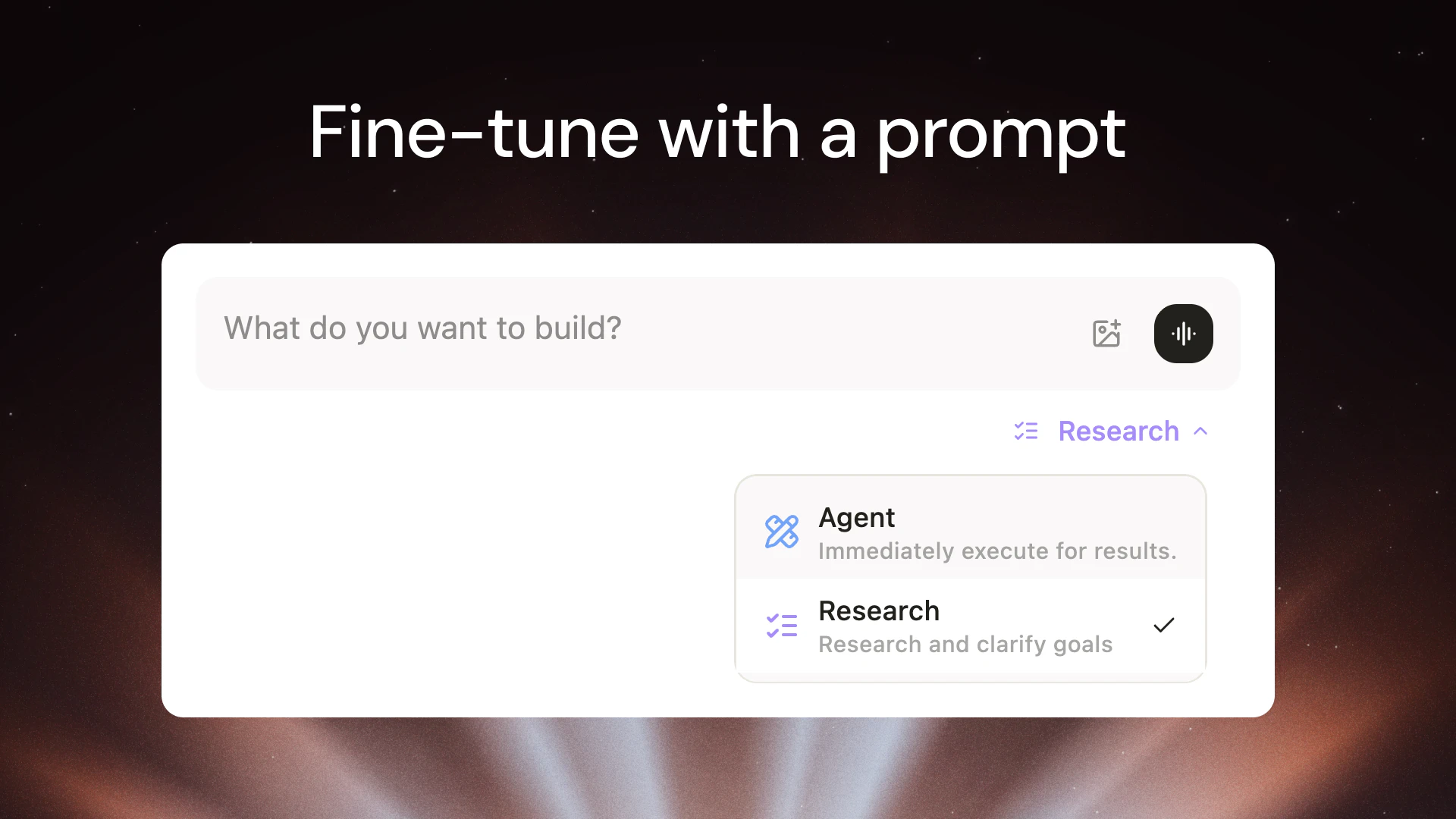

- Adaptive inference API

- Fine-tuning for 30+ models

- Automated model improvement

In their own words

Your LLM is wrong sometimes. Fix it.

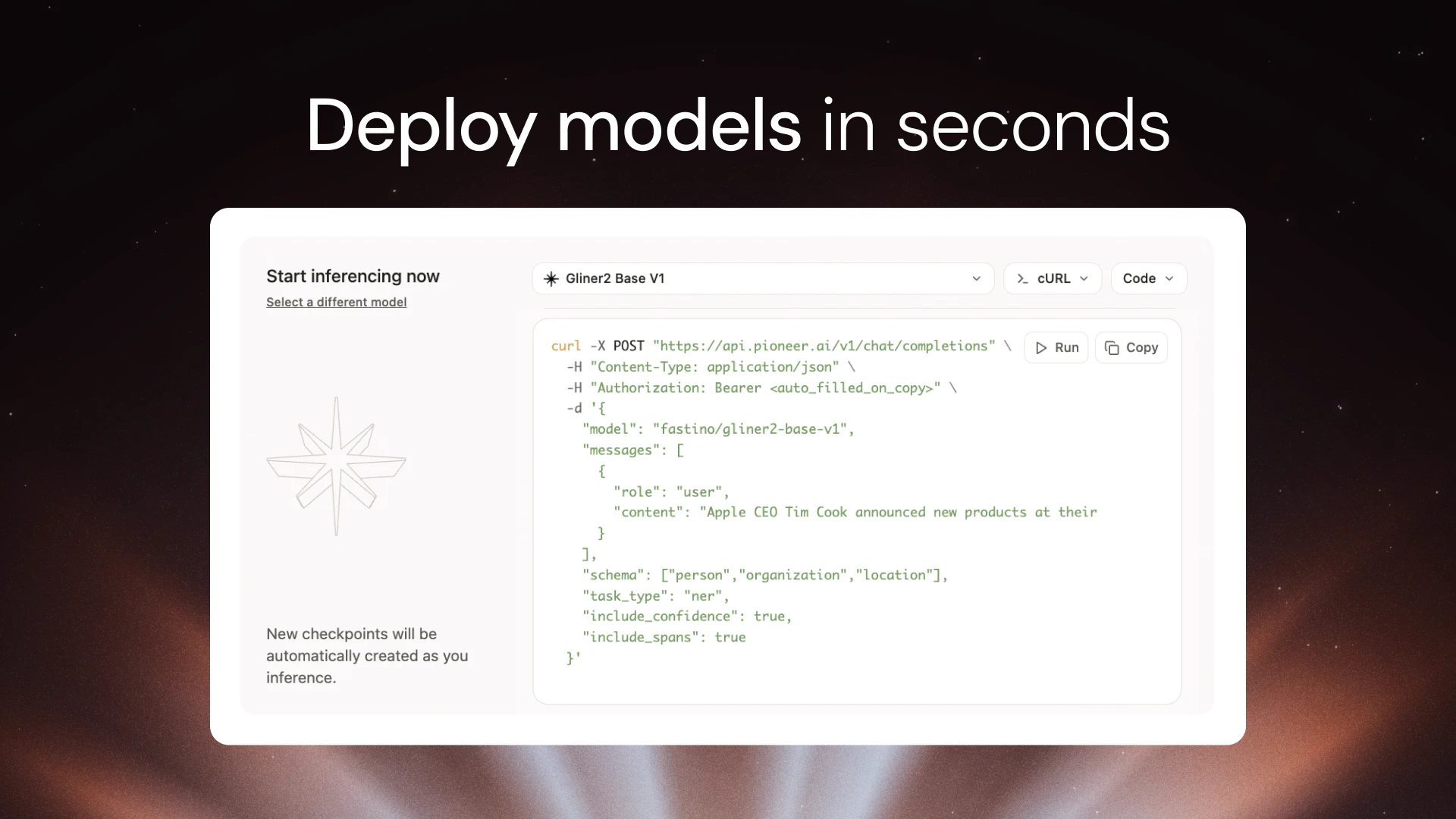

Inference API for 30+ models, including Opus 4.7 and GPT 5.5, that actually learns from your production traffic and gets smarter every week.

An inference API that learns its job.

Commercial cues

Model: Subscription

Free tier: Yes

Trial: No

- Free: Inference API

- Pro (Popular): Everything in Free · Higher rate limits · Downloadable model weights

- Research: Everything in Pro · Deep Research mode · Multi-hour experiment tracking

- Enterprise: Custom

Pricing Strategy

Employs a freemium model with a generous credit, designed to hook developers before scaling to tiered subscriptions based on features and research capabilities.

- Offers a free tier with a $75 usage credit, allowing developers to experiment without immediate cost.

- Provides promotional free inference until August 2026, creating a long runway for adoption and integration.

- Scales through tiered pricing (Pro, Research, Enterprise) that unlocks advanced features and higher usage limits for growing teams.

Operator context

Founded: Apr 2026


Builder Strategy

- Strategy type: Niche Specialist

- Stage: Pre-Revenue

- Effort: Complex Stack

Targets developers struggling with LLM inaccuracies, offering an adaptive inference API that learns from production traffic and improves models automatically.

Unfair Advantages

- Proprietary data: Adaptive inference learns from production traffic, creating a unique model-improvement loop.

- Unorthodox pricing: Promotional free inference until Aug 2026 defers revenue in favor of adoption.

Builder Lesson

Offer a generous free tier, with paid overages starting only at a set future date, to drive adoption.

Full Reasoning

Wins by attacking the pervasive problem of LLM inaccuracies with an adaptive inference API that automates model improvement. The core wedge is the 'adaptive inference' itself, which learns from production traffic and retrains models quietly, dramatically reducing ML engineer overhead. This approach makes existing LLMs smarter over time, especially open-source models. Builders should focus on automating core improvement loops within their product to reduce friction and deliver continuous value to users, turning a complex problem into a set-and-forget solution.

About Pioneer

Pioneer AI provides a sophisticated adaptive inference API designed for developers and teams deploying large language models. This platform tackles the common challenge of LLM inaccuracies by learning from real-time production traffic and automatically retraining models. It's built to simplify the complex process of model optimization, allowing users to deploy models in minutes and benefit from continuous improvement without extensive manual intervention.

The core value proposition of Pioneer AI lies in its ability to make LLM deployments, especially those using open-source models, inherently smarter and more reliable over time. By automating the fine-tuning and retraining process, it significantly reduces the operational burden on machine learning engineers. This approach allows teams to focus on application development rather than the intricacies of model maintenance, positioning Pioneer AI as a crucial tool for anyone looking to enhance the performance and accuracy of their LLM-powered applications.