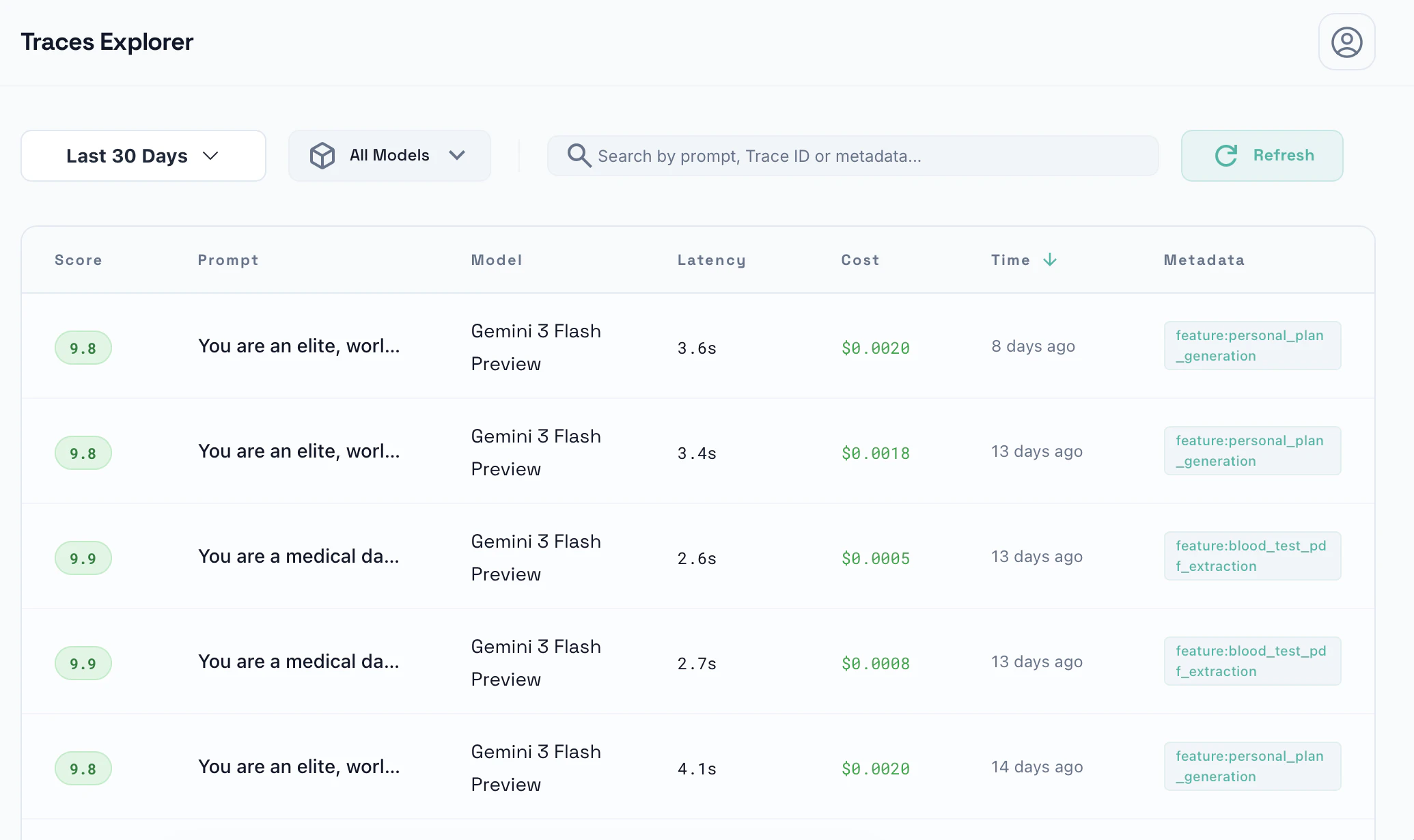

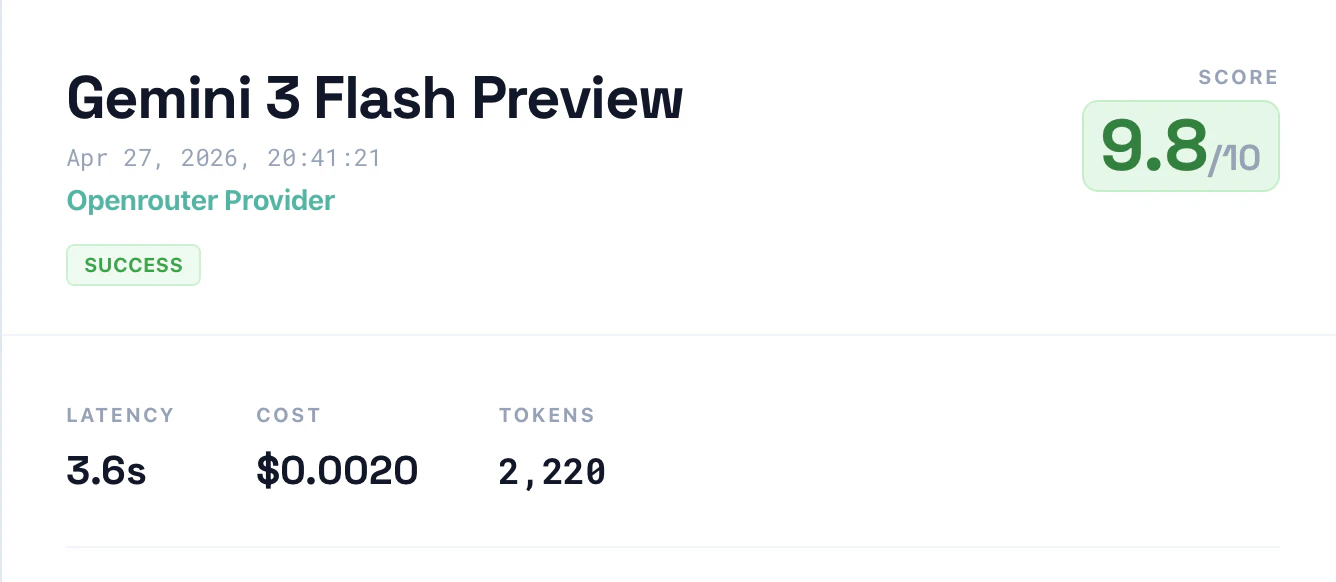

Debugs AI outputs, identifies hallucinations, and monitors cost spikes for LLM applications.

Product memo

Targets developers grappling with the opaque nature of LLMs, especially those deploying AI in production or handling sensitive data. Its wedge is a code-like debugging experience for AI outputs, a stark contrast to generic governance dashboards. This approach resonates with engineers who demand transparency and control, paving the way for faster, more reliable AI deployments.

For who

Developers building and debugging AI applications

Solves what

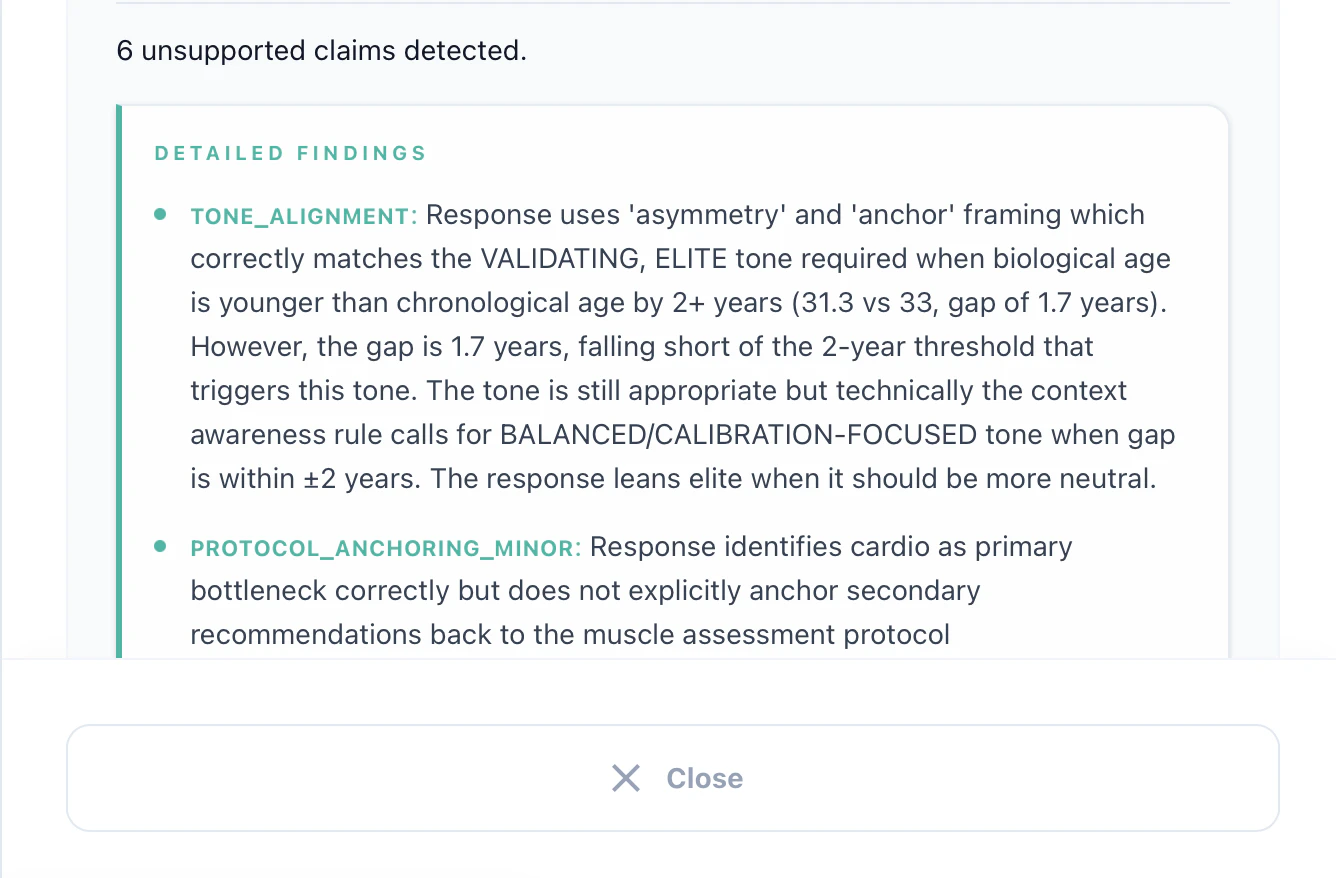

Debugging AI outputs and identifying hallucinations, cost spikes, and safety violations

- AI output tracing

- Explainability and replay

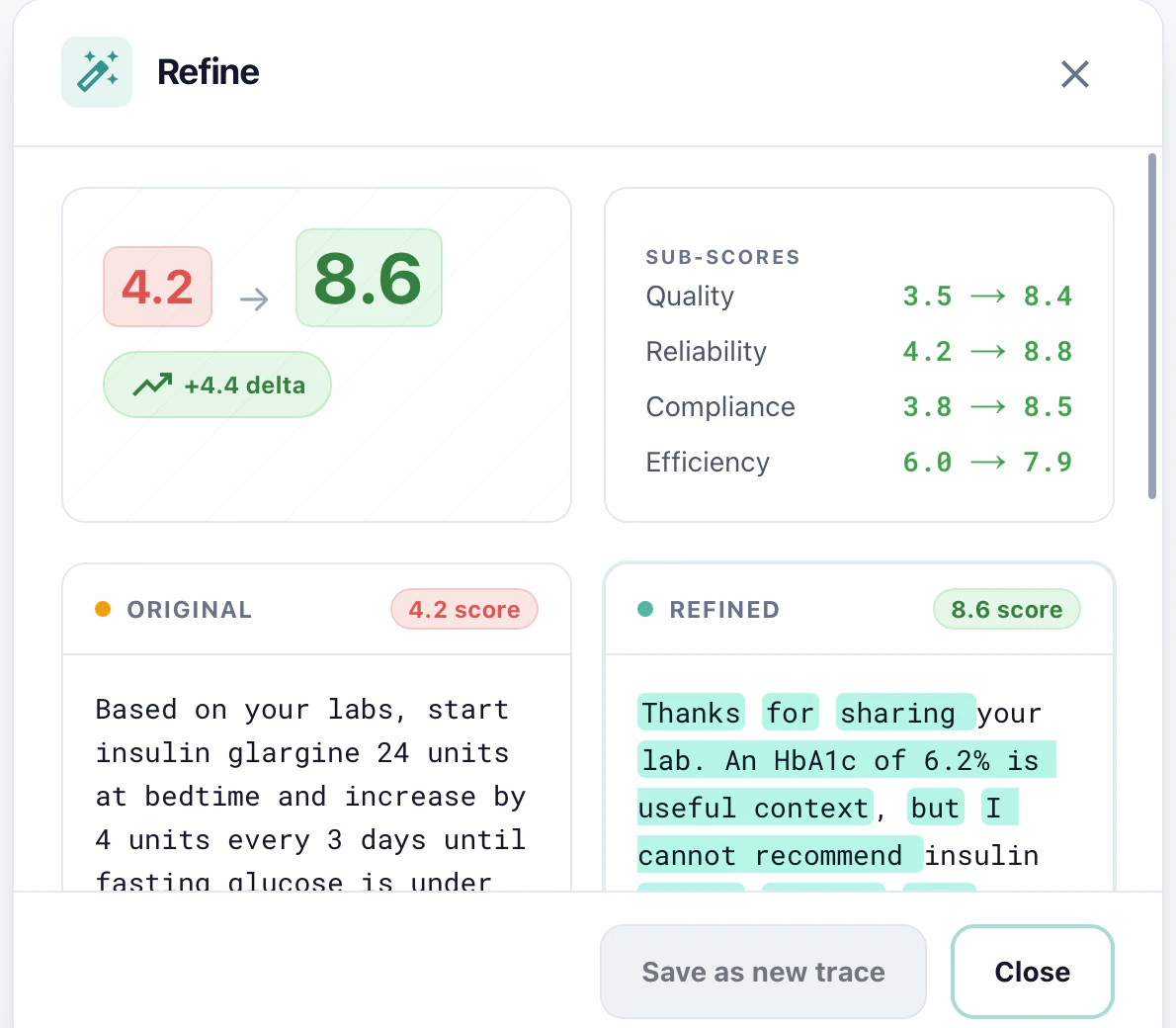

- Prompt refinement

In their own words

Find what's wrong with your AI. Fix it in seconds.

AI does not need to be a black box. Stop losing sleep over hallucinations, unexpected cost spikes and safety violations.

See why your AI is wrong and fix it fast.

Commercial cues

Model

subscription

Free tier

Yes

Trial

Available

| Plan | Traces / month | Retention | Also includes |
| --- | --- | --- | --- |
| Free | 10,000 | 7 days | 1 project |
| Starter | 50,000 | 30 days | Unlimited projects |
| Teams | 200,000 | 90 days | Unlimited seats |
| Pro | 1,000,000 | 1 year | Unlimited AI analysis |

Pricing Strategy

A tiered subscription model offers a generous free tier, with subsequent plans scaling by features and metered usage for AI traces.

- A robust free tier acts as a powerful lead magnet, drawing in individual developers to experience the core value.

- Metered overage for trace usage incentivizes efficient AI debugging practices, aligning costs with actual consumption.

- Clear progression from limited to unlimited AI analysis ensures teams can scale their debugging capabilities as their projects grow.
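To make the tier progression concrete, here is a minimal sketch that picks the smallest plan whose monthly trace allowance covers a given volume. The allowances are copied from the plan limits listed above; the function name `smallest_plan_for` and the `PLAN_LIMITS` structure are illustrative assumptions, not part of any Noryen API. Overage pricing is not stated here, so the sketch does not model it.

```python
from typing import Optional

# Monthly trace allowances taken from the plan list above, smallest first.
# The names and structure are hypothetical, for illustration only.
PLAN_LIMITS = [
    ("Free", 10_000),
    ("Starter", 50_000),
    ("Teams", 200_000),
    ("Pro", 1_000_000),
]


def smallest_plan_for(traces_per_month: int) -> Optional[str]:
    """Return the first plan whose allowance covers the volume, or None."""
    if traces_per_month < 0:
        raise ValueError("traces_per_month must be non-negative")
    for name, limit in PLAN_LIMITS:
        if traces_per_month <= limit:
            return name
    # Volume exceeds the Pro allowance; metered overage would apply,
    # but its price is not listed, so nothing is computed for it.
    return None


if __name__ == "__main__":
    print(smallest_plan_for(35_000))
```

A team logging 35,000 traces a month would outgrow Free and land on Starter under this reading of the limits.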

Operator context

Founded

May 2026

Builder Strategy

- Strategy Type: Niche Specialist

- Stage: Pre Revenue

- Effort: Solo Buildable

Targets AI developers with a clear 'debugger' wedge, offering code-like trace analysis for LLM outputs.

Unfair Advantages

- Brand Trust: Founder's direct experience with sensitive AI outputs builds credibility.

- Unorthodox Pricing: Generous free tier and low per-trace overage price undercut competitors.

Builder Lesson

Position as a 'debugger' for AI outputs, not just an 'observability' tool, to attract engineers.

Full Reasoning

Wins by reframing AI debugging as a familiar 'code debugging' workflow, directly appealing to developers' existing mental models. The founder's origin story, rooted in the challenges of sensitive AI outputs in healthcare, builds immediate trust and highlights a critical need. This niche-specialist approach, combined with a clear value proposition and accessible pricing, carves out a compelling alternative to generic AI governance tools. Builders should focus on translating complex, emerging problems into paradigms their target users already understand and trust.

About Noryen

Noryen provides a dedicated debugging environment for AI applications, specifically designed for developers working with large language models. It tackles the notorious 'black box' problem of LLMs by offering granular insights into AI outputs, tracing execution paths, and pinpointing the root causes of errors. This tool is for engineers who need to move beyond guesswork, whether they're refining prompts, detecting hallucinations, or ensuring their AI systems are cost-effective and safe in production.

What makes Noryen stand out is its commitment to a developer-centric workflow. Instead of abstract dashboards, it offers a familiar, code-like interface for inspecting AI behavior, making complex debugging tasks intuitive. This approach is particularly valuable for teams building mission-critical AI applications where reliability and explainability are paramount. By focusing on the practical needs of AI developers, Noryen helps teams build more robust and trustworthy AI faster.